Data Science With Python Core Skills

Learning Path ⋅ Skills: Pandas, NumPy, Data Cleaning, Data Visualization, Statistics

Data science with Python starts with knowing how to load, clean, and explore real datasets. This learning path gives you the core skills to do that confidently.

By completing this path, you’ll be able to:

- Set up a Jupyter Notebook environment and work with pandas DataFrames

- Read, clean, group, and combine datasets using pandas

- Create static and interactive visualizations with Matplotlib, pandas, and Bokeh

- Use NumPy for array operations, random data generation, and statistics

- Apply correlation analysis and statistics fundamentals to real data

This path is for Python developers who want to start working with data professionally. You should be comfortable with Python basics.

You’ll move from environment setup through data wrangling and visualization, finishing with statistics and NumPy techniques.

Learning Path ⋅ 21 Resources

Getting Started

Set up your data science environment and get introduced to pandas, Python’s most popular data analysis library.

Course

Using Jupyter Notebooks

Learn how to get started with the Jupyter Notebook, an open source web application that you can use to create and share documents that contain live code, equations, visualizations, and text.

Course

Introduction to pandas

Learn pandas DataFrames: explore, clean, and visualize data with powerful tools for analysis. Delete unneeded data, import data from a CSV file, and more.

Working With Data in pandas

Learn how to read, explore, and work with tabular data using pandas DataFrames. You’ll read CSV and JSON files, inspect datasets, and perform common operations.

Course

Explore Your Dataset With pandas

Learn how to start exploring a dataset with pandas and Python. You'll learn how to access specific rows and columns to answer questions about your data. You'll also see how to handle missing values and prepare to visualize your dataset in a Jupyter Notebook.

Course

Reading and Writing CSV Files

This short course covers how to read and write CSV files using Python's built-in csv module and the pandas library. You'll learn how to handle both standard and non-standard data, such as CSV files without headers or files whose fields contain the delimiter character.
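As a rough illustration of the non-standard cases mentioned above, here is a minimal sketch that uses only the standard library csv module. The sample rows and the column names (`first`, `last`, `born`) are made up for this example and do not come from the course.

```python
import csv
import io

# Hypothetical sample data: no header row, and one field contains a comma.
raw = 'Ada,"Lovelace, Countess",1815\nAlan,Turing,1912\n'

# csv.reader honors the quotes, so the embedded comma stays inside one field.
rows = list(csv.reader(io.StringIO(raw)))

# The file has no header, so supply the column names yourself.
fieldnames = ["first", "last", "born"]
records = [dict(zip(fieldnames, row)) for row in rows]

# Write the records back out; the writer re-quotes any field that needs it.
out = io.StringIO()
writer = csv.DictWriter(out, fieldnames=fieldnames)
writer.writeheader()
writer.writerows(records)
csv_text = out.getvalue()
```

An in-memory `io.StringIO` buffer stands in for a real file here. With a file on disk, you would typically pass `newline=""` to `open()` so the csv module controls line endings.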

Interactive Quiz

Reading and Writing CSV Files in Python

Course

Working With JSON in Python

Learn how to work with Python's built-in json module to serialize the data in your programs into JSON format. Then, you'll deserialize some JSON from an online API and convert it into Python objects.
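To make the serialize and deserialize round trip concrete, here is a small sketch with the built-in json module. The `profile` dictionary is invented for illustration, and a plain string stands in for the API response that the course fetches online.

```python
import json

# Hypothetical Python object to serialize.
profile = {
    "name": "Ada",
    "languages": ["Python", "SQL"],
    "active": True,
    "manager": None,
}

# Serialize: Python objects become JSON text (True -> true, None -> null).
text = json.dumps(profile, indent=2, sort_keys=True)

# Deserialize: JSON text becomes Python objects again.
# In practice, this text might be the body of an API response.
restored = json.loads(text)
```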

Interactive Quiz

Working With JSON Data in Python

Course

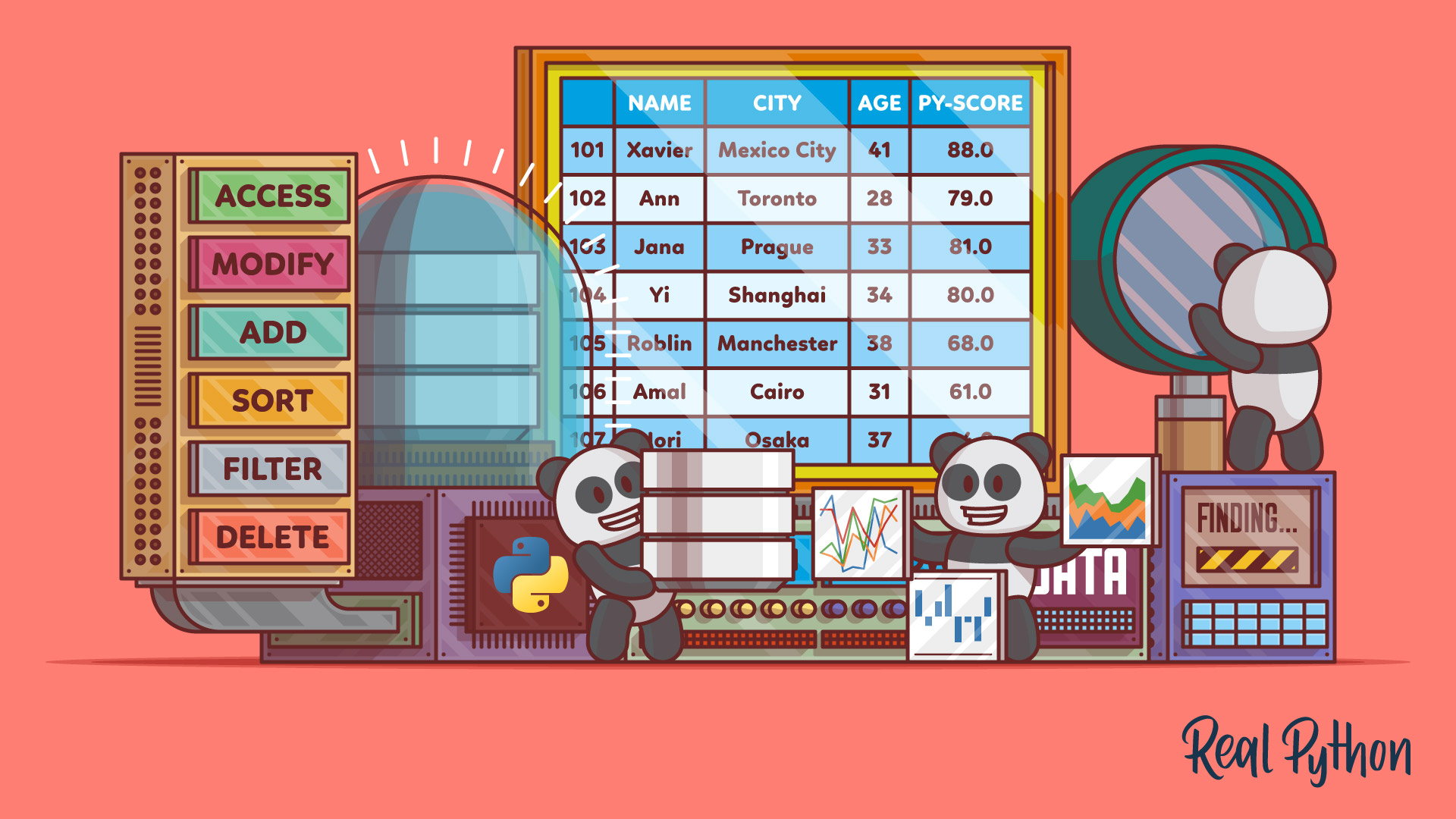

The pandas DataFrame: Working With Data Efficiently

In this course, you'll get started with pandas DataFrames, which are powerful and widely used two-dimensional data structures. You'll learn how to perform basic operations with data, handle missing values, work with time-series data, and visualize data from a pandas DataFrame.

Interactive Quiz

The pandas DataFrame: Make Working With Data Delightful

Manipulating and Cleaning Data

Learn how to clean, group, and combine datasets in pandas. You’ll also pick up idiomatic pandas patterns that make your code more efficient.

Course

Data Cleaning With pandas and NumPy

Learn how to clean up messy data using pandas and NumPy. You'll become equipped to deal with a range of problems, such as missing values, inconsistent formatting, malformed records, and nonsensical outliers.

Course

pandas GroupBy: Grouping Real World Data in Python

Learn how to work adeptly with pandas GroupBy while mastering ways to manipulate, transform, and summarize data. You'll work with real-world datasets and chain GroupBy methods together to shape your data into the output you need.
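The kind of chained GroupBy pipeline described above might look like the following sketch. The `sales` table and its column names are made up for this example, and the course itself works with different, real-world datasets.

```python
import pandas as pd

# Hypothetical sales records.
sales = pd.DataFrame(
    {
        "region": ["east", "west", "east", "west", "east"],
        "units": [10, 4, 6, 8, 2],
        "price": [2.0, 3.0, 2.0, 3.0, 2.5],
    }
)

# Chain the steps: derive a column, group, aggregate, then sort.
summary = (
    sales.assign(revenue=sales["units"] * sales["price"])
    .groupby("region")
    .agg(total_units=("units", "sum"), mean_revenue=("revenue", "mean"))
    .sort_values("total_units", ascending=False)
)
```

Named aggregation (`total_units=("units", "sum")`) keeps the output column names explicit, which tends to make chained pipelines easier to read.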

Course

Combining Data in pandas With concat() and merge()

Learn two techniques for combining data in pandas: merge() and concat(). Combining Series and DataFrame objects in pandas is a powerful way to gain new insights into your data.
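As a quick sketch of how the two techniques differ, `concat()` stacks objects along an axis, while `merge()` joins them on key columns. The tables below are invented for illustration only.

```python
import pandas as pd

# Hypothetical order tables from two quarters, plus a customer lookup table.
q1 = pd.DataFrame({"customer_id": [1, 2], "amount": [50, 20]})
q2 = pd.DataFrame({"customer_id": [2, 3], "amount": [35, 80]})
customers = pd.DataFrame({"customer_id": [1, 2], "name": ["Ada", "Alan"]})

# concat() stacks the rows; ignore_index=True builds a fresh 0..n-1 index.
orders = pd.concat([q1, q2], ignore_index=True)

# merge() joins on a key column; how="left" keeps every order,
# leaving name as NaN where no matching customer exists.
labeled = orders.merge(customers, on="customer_id", how="left")
```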

Course

Idiomatic pandas: Tricks & Features You May Not Know

In this course, you'll see how to use some lesser-known but idiomatic pandas capabilities that make your code more readable, versatile, and fast.

Visualizing Your Data

Create plots, charts, and interactive visualizations from your data. You’ll work with Matplotlib, pandas plotting, and Bokeh.

Course

Python Plotting With Matplotlib

In this beginner-friendly course, you'll learn about plotting in Python with Matplotlib by looking at the theory and following along with practical examples.

Course

Plot With pandas: Python Data Visualization Basics

In this course, you'll get to know the basic plotting possibilities that Python provides in the popular data analysis library pandas. You'll learn about the different kinds of plots that pandas offers, how to use them for data exploration, and which types of plots are best for certain use cases.

Course

Interactive Data Visualization With Bokeh and Python

This course will get you up and running with Bokeh, using examples and a real-world dataset. You'll learn how to visualize your data, customize and organize your visualizations, and add interactivity.

Course

Histogram Plotting in Python: NumPy, Matplotlib, Pandas & Seaborn

In this course, you'll be equipped to make production-quality, presentation-ready Python histogram plots with a range of choices and features. It's your one-stop shop for constructing and manipulating histograms with Python's scientific stack.

Statistics and NumPy

Explore descriptive statistics, random data generation, and correlation analysis. You’ll also learn NumPy’s array programming model for efficient numerical computation.

Tutorial

Python Statistics Fundamentals: How to Describe Your Data

Learn the fundamentals of descriptive statistics and how to calculate them in Python. You'll find out how to describe, summarize, and represent your data visually using NumPy, SciPy, pandas, Matplotlib, and the built-in Python statistics library.
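For a sense of what describing a dataset can involve, here is a minimal sketch using only the built-in statistics library. The `values` list is made up for this example, and the tutorial also covers NumPy, SciPy, and pandas equivalents.

```python
import statistics

# Hypothetical sample of eight observations.
values = [2, 4, 4, 4, 5, 5, 7, 9]

mean = statistics.mean(values)      # arithmetic average
median = statistics.median(values)  # middle of the sorted data
mode = statistics.mode(values)      # most frequent value
pstdev = statistics.pstdev(values)  # population standard deviation
```

Note that `pstdev()` treats the data as the whole population. Use `statistics.stdev()` instead when the data is a sample drawn from a larger population.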

Course

Generating Random Data in Python

See several options for generating random data in Python, and then build up to a comparison of each in terms of its level of security, versatility, purpose, and speed.
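The trade-off between reproducibility and security can be sketched with two standard library modules. This is an illustrative example under simple assumptions (a die-roll simulation and a 16-byte token), not a summary of the full comparison in the course.

```python
import random
import secrets

# random: fast and reproducible when seeded, but not suitable for secrets.
rng_a = random.Random(42)
sample_a = [rng_a.randint(1, 6) for _ in range(5)]

# The same seed yields the same sequence, which is handy for tests and simulations.
rng_b = random.Random(42)
sample_b = [rng_b.randint(1, 6) for _ in range(5)]

# secrets: draws from the operating system's secure source; it cannot be seeded.
token = secrets.token_hex(16)  # 16 random bytes rendered as 32 hex characters
```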

Tutorial

NumPy, SciPy, and pandas: Correlation With Python

Learn what correlation is and how you can calculate it with Python. You'll use SciPy, NumPy, and pandas correlation methods to calculate three different correlation coefficients. You'll also see how to visualize data, regression lines, and correlation matrices with Matplotlib.

Tutorial

Look Ma, No for Loops: Array Programming With NumPy

Learn how to take advantage of vectorization and broadcasting so you can use NumPy to its full capacity. In this tutorial, you'll see step by step how these advanced features in NumPy help you write faster code.
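As a small, hedged illustration of broadcasting, the sketch below centers each column of a made-up array and compares the result with an explicit nested loop. The array values are invented for this example.

```python
import numpy as np

# Hypothetical data: 3 rows, 2 columns.
data = np.array([[1.0, 10.0], [2.0, 20.0], [3.0, 30.0]])

# Column means have shape (2,); broadcasting stretches them across all 3 rows.
col_means = data.mean(axis=0)
centered = data - col_means

# Equivalent explicit loops, shown only for comparison.
looped = np.empty_like(data)
for i in range(data.shape[0]):
    for j in range(data.shape[1]):
        looped[i, j] = data[i, j] - col_means[j]
```

Both versions produce the same array, but the broadcast form runs the subtraction in compiled code rather than in the Python interpreter, which is where the speedup on large arrays comes from.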

Course

NumPy Techniques and Practical Examples

Learn how to use NumPy by exploring several interesting examples. You'll read data from a file into an array and analyze structured arrays to perform a reconciliation. You'll also learn how to quickly chart an analysis and turn a custom function into a vectorized function.

Interactive Quiz

NumPy Practical Examples: Useful Techniques

Tutorial

Data Engineer Interview Questions With Python

This tutorial will prepare you for some common questions you'll encounter during your data engineer interview. You'll learn how to answer questions about databases, ETL pipelines, and big data workflows. You'll also take a look at SQL, NoSQL, and Redis use cases and query examples.

Test Your Knowledge

You’ve made it through the entire path! In the wrap-up quiz below, you’ll revisit the most important ideas about CSV and JSON file handling, pandas DataFrames, and NumPy arrays. Use it to spot any topics that still feel rusty before moving on.

Interactive Quiz

Data Science With Python Core Skills

Test your data science core skills in Python, including CSV and JSON file handling, pandas DataFrames, and NumPy arrays.

Congratulations on completing this learning path! You’ve built a solid foundation in Python data science using pandas, NumPy, and visualization libraries like Matplotlib and Bokeh.
